Agent Opus

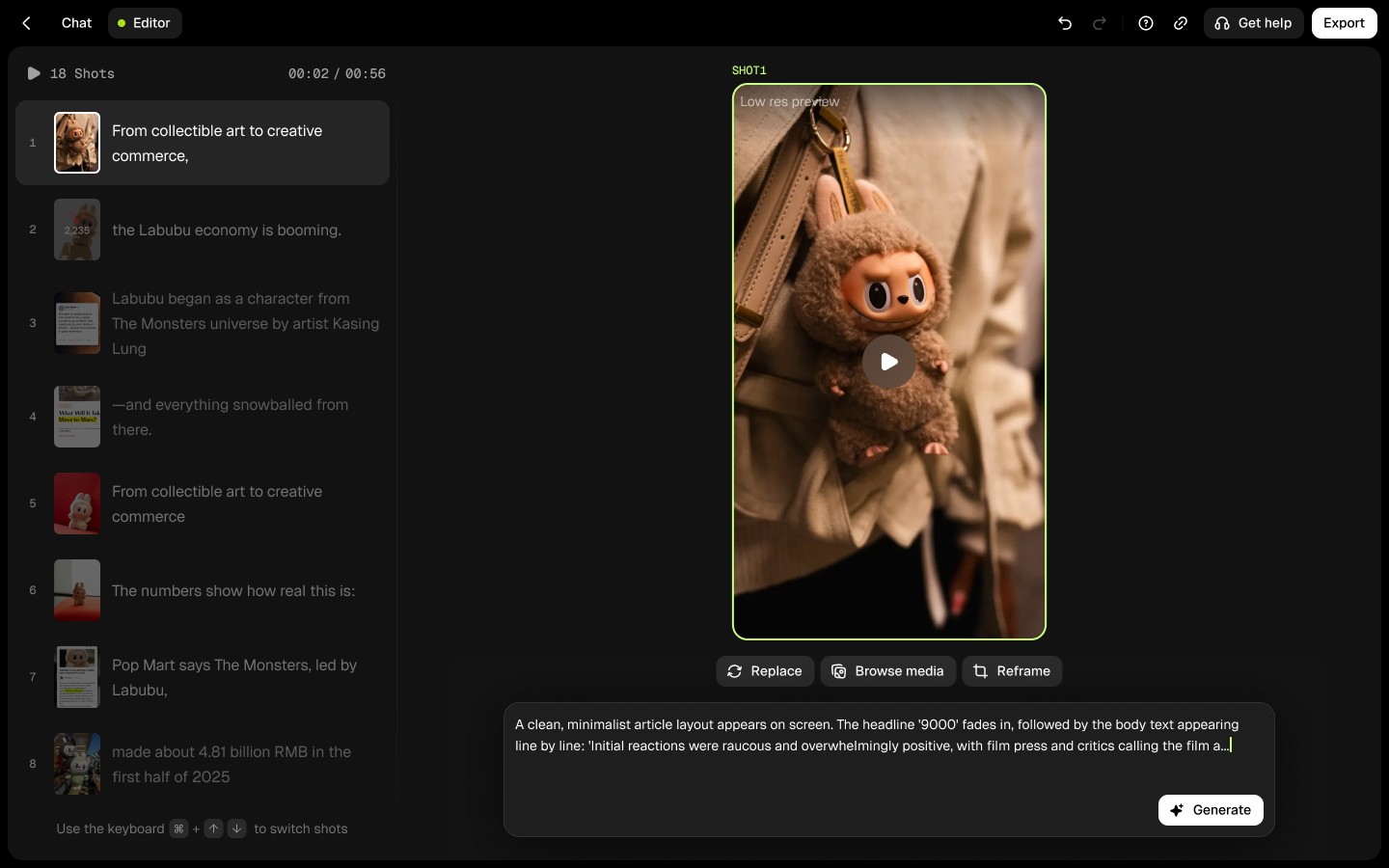

Designing prompt editing experiences for an AI video platform, including inpainting, asset replacement, and keyframe regeneration across six content patterns. Improved retention rate by 38% and publish rate by 29% in the most recent Beta release. Ongoing: currently iterating through prototyping and usability testing.

AI Video Agent

Prompt Editing

context

To make AI-powered video editing intuitive for creators

Generating a video is only half the experience: users need to edit and refine the output before publishing. I joined to design the prompt editing experience across six content patterns, with the goal of increasing editor adoption and boosting publish rates for the upcoming Beta release.

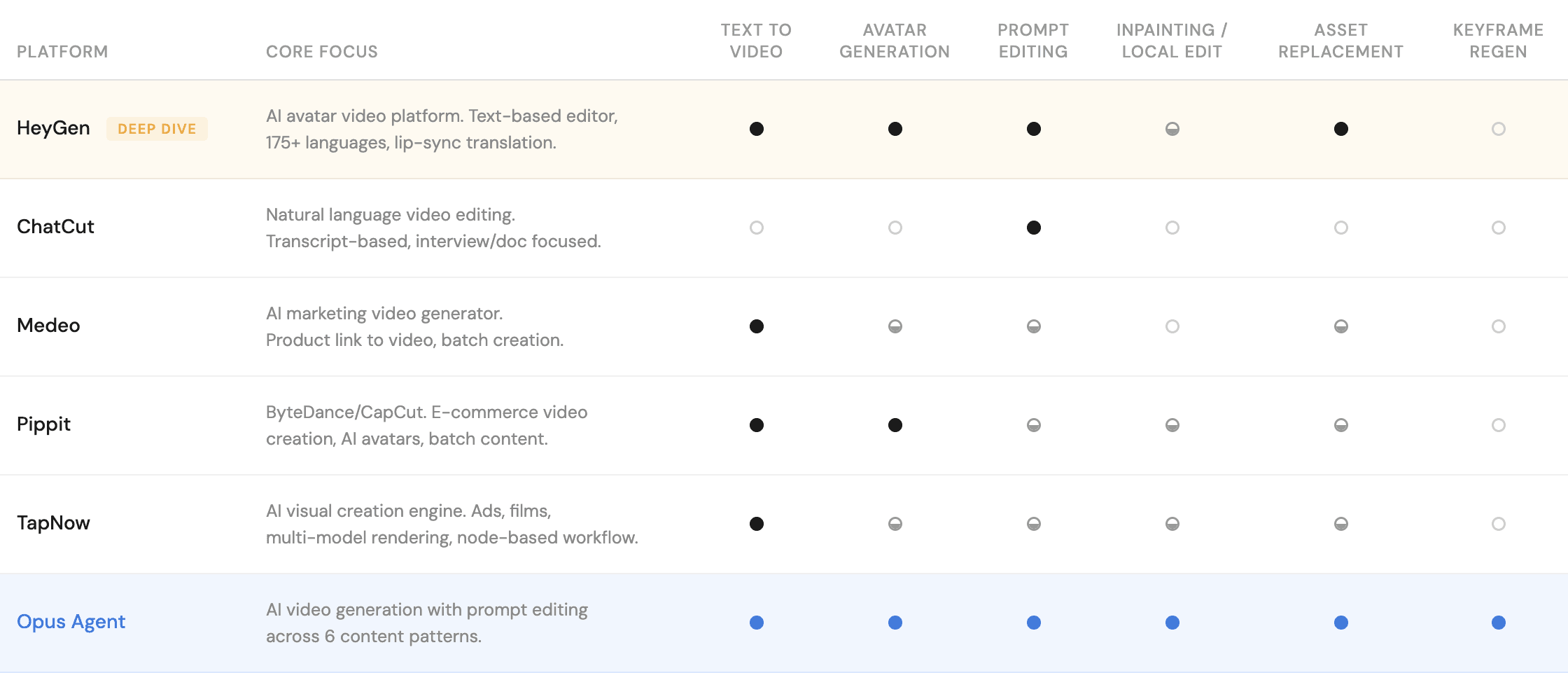

Competitive Analysis

How others handle AI editing

USABILITY TESTING

What are the existing problems in the Beta

We ran usability tests on the newest Beta release and uncovered specific friction points in the editing flow.

Users struggled in three ways: they could not tell what was editable, they received unclear feedback from the AI, and they got lost between content patterns. These findings directly reshaped the scope and priorities of the next Beta.

NEXT STEPS

What's ahead

I'm continuing to explore prompt editing interaction patterns across content types, with a focus on making the AI's editable boundaries more visible and giving users more granular control over generation outputs.